Valentin Heun is VP of Innovation Engineering at PTC, where he leads the PTC Reality Lab. His research focuses on new methods of computer interaction in physical space. His work has been published at academic conferences including Ubiquitous Computing (UbiComp 2012, 2013), SIGGRAPH Asia (SA 2012), Tangible, Embedded, and Embodied Interaction (TEI 2013), the Conference on Human Factors in Computing Systems (CHI 2013), and User Interface Software and Technology (UIST 2014). He has been interviewed by media outlets such as Fast Company, Vice, The Verge, Wired, Core77, PSFK, the Daily Dot, Stylepark, Makezine, and the Boston Globe. He received the 2012 SIGGRAPH Asia Emerging Technologies Prize, was named to Wired UK's Smart List 2013, received the Postscapes 2016 Editors' Choice Award for IoT Software & Tools, and was a finalist for Fast Company's 2016 Innovation by Design Award; Fast Company also named his work among the Boldest Ideas in User Interface Design 2015. Valentin holds a Ph.D. from the MIT Media Lab and a German Diplom in Design from the Bauhaus-University.

Will Ferrer, Corporate Strategy Analyst, co-authored this post with David Immerman, Senior Research Analyst.

The question “What is spatial computing?” becomes more pressing as more industrial enterprises look to digitally transform their businesses, particularly businesses that involve front-line workers and operate in the physical world, such as factories, warehouses, and worksites.

The term spatial computing was defined by MIT Media Lab alumnus Simon Greenwold in his forward-looking 2003 thesis. However, his vision has only recently become achievable, thanks to advances in several technologies: artificial intelligence (AI), camera sensors, and computer vision, which track environments, humans, and objects; the Internet of Things (IoT), which monitors and controls products and assets; and augmented reality (AR), which provides the human user interface.

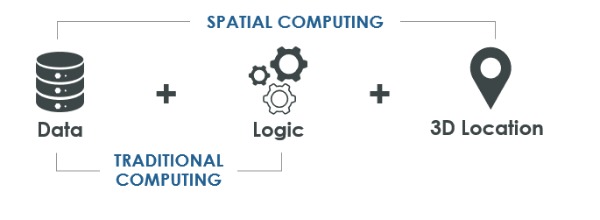

At its highest level, spatial computing is the virtualization of activities and interactions between machines, people, objects, and the environments in which they take place, with the goal of enabling and optimizing those activities and interactions. In other words, it expands the concept of ‘traditional computing’ by adding knowledge of relative location, that is, location with respect to other locations. For example, an autonomous vehicle uses GPS, LiDAR, volumetric camera sensors, and other technologies to pinpoint its precise location and measure its proximity to objects in the driving environment.
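To make relative location concrete, here is a minimal Python sketch. It assumes a flat 2D map, positions that have already been fused from sensors such as GPS and LiDAR, and hypothetical names (`Pose`, `to_vehicle_frame`, `nearest_object`) invented for illustration. It re-expresses world coordinates in the vehicle's own frame (ahead/left) and finds the closest object; real vehicles rely on far richer 3D state estimation.

```python
import math
from dataclasses import dataclass
from typing import Dict, Tuple

@dataclass
class Pose:
    """Hypothetical 2D vehicle pose: position in meters, heading in radians CCW from +x."""
    x: float
    y: float
    heading: float

def to_vehicle_frame(vehicle: Pose, obj_x: float, obj_y: float) -> Tuple[float, float]:
    """Express a world-frame point relative to the vehicle: +x is ahead, +y is to the left."""
    dx, dy = obj_x - vehicle.x, obj_y - vehicle.y
    cos_h, sin_h = math.cos(vehicle.heading), math.sin(vehicle.heading)
    # Rotate the world offset by -heading so the vehicle's forward direction becomes +x.
    return (dx * cos_h + dy * sin_h, -dx * sin_h + dy * cos_h)

def nearest_object(vehicle: Pose, objects: Dict[str, Tuple[float, float]]) -> Tuple[str, float]:
    """Return the name of the closest object and its straight-line distance in meters."""
    def distance(item: Tuple[str, Tuple[float, float]]) -> float:
        _, (ox, oy) = item
        return math.hypot(ox - vehicle.x, oy - vehicle.y)
    closest = min(objects.items(), key=distance)
    return closest[0], distance(closest)
```

For a vehicle at the origin facing north (heading pi/2), an object at (0, 10) comes out 10 m straight ahead and one at (-3, 0) comes out 3 m to the left, which is the kind of "relative to what" answer the paragraph above describes.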

Automation, and machines working alongside humans, are becoming more prevalent globally. Spatial computing will unlock synchronized operations between these humans and machines as they work side by side. It offers a way to optimize entire worksites occupied by humans and machines, down to coordinating the work of every machine and worker involved in the process.

Spatial computing enables coequal collaboration between humans and machines, but it also enhances each individually. We’ll unpack the important components of this concept, with existing and future examples illustrating its potential.

Spatial computing advances already intelligent machines

Automated warehouse operations are cutting-edge examples of spatial computing. The “Amazon effect”, which drives e-commerce sales and consumer expectations of next-day delivery, has transformed retail warehouses. They must move goods rapidly to fill tens of thousands of customer orders every day.

The automated guided vehicles (AGVs) in these operations constantly process location, relative location, and speed. In real time, each AGV must answer: Where is it now, and relative to what? Where was it recently, and where will it be next? How fast is it moving? Spatial computing across this dynamic 3D environment manages and optimizes a fleet of AGVs for autonomous fulfillment, using their locations relative to one another, their proximity to the target goods, and their destination points to get the right goods to the right place as efficiently as possible.
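The AGV questions above can be sketched in Python as follows. This is an illustrative toy, not how any particular warehouse system works: `AGVState`, `predict`, and `assign_tasks` are hypothetical names, motion is assumed to be constant-velocity, and tasks go to the nearest idle vehicle by a simple greedy rule rather than an optimal fleet-wide assignment.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple

@dataclass
class AGVState:
    """Hypothetical AGV snapshot: position (m) and velocity (m/s) on the warehouse floor."""
    agv_id: str
    x: float
    y: float
    vx: float
    vy: float
    busy: bool = False

    def speed(self) -> float:
        """How fast is it moving? (m/s)"""
        return math.hypot(self.vx, self.vy)

    def predict(self, dt: float) -> Tuple[float, float]:
        """Where will it be in dt seconds? Assumes constant velocity."""
        return (self.x + self.vx * dt, self.y + self.vy * dt)

def assign_tasks(fleet: List[AGVState], tasks: Dict[str, Tuple[float, float]]) -> Dict[str, str]:
    """Greedily give each pick task (task id -> shelf location) to the nearest idle AGV."""
    assignments: Dict[str, str] = {}
    idle = [agv for agv in fleet if not agv.busy]
    for task_id, (tx, ty) in tasks.items():
        if not idle:
            break  # More tasks than idle vehicles: the rest wait for the next planning cycle.
        best = min(idle, key=lambda agv: math.hypot(agv.x - tx, agv.y - ty))
        assignments[task_id] = best.agv_id
        idle = [agv for agv in idle if agv is not best]
    return assignments
```

A greedy rule is easy to follow but can be suboptimal when several tasks compete for the same vehicle; an optimal matching such as the Hungarian algorithm is a common upgrade.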

A use case doesn’t have to be as sophisticated as a highly autonomous warehouse to be considered spatial computing. Smart vacuums like Roomba use analogous spatial technologies to navigate your living spaces.

Empowering humans through spatial capabilities

Human-machine interfaces are evolving so that workers can participate in this emerging spatial computing arena. For example, spatial computing can improve how workers interact with the physical systems common in industrial environments such as factories.

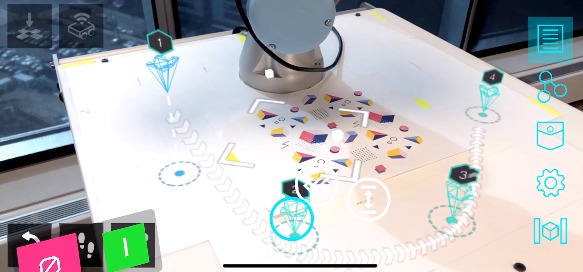

Reprogramming robots in real-time

Many robots on a production line require reprogramming when they need to perform a new task. This time-consuming process can require line stoppages and specialized engineers for software updates, with costly impacts on machine setup and changeover times and other critical manufacturing KPIs.

With spatial computing, in-context robot reprogramming could allow a human operator to understand, control, and plan a robot's movements for new tasks more simply, with minimal downtime and without specialized engineering knowledge. Simple, visual programming with customizable logic flows brings the robot's movements into the worker's field of view, potentially enabling more collaborative forms of work. For example, a mobile operator could designate waypoints for a mobile cobot to carry a heavy load across a factory, a strenuous and unsafe task for humans.
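A waypoint program of the kind described above might be represented as a list of operator-placed points with simple actions attached. The Python sketch below is hypothetical (`Waypoint`, `KeepOutZone`, and `build_route` are not part of any robot vendor's API): it enforces a few basic rules of the logic flow and returns the route length. For brevity it checks only the waypoints themselves, not whether the straight segments between them cross a keep-out zone.

```python
import math
from dataclasses import dataclass
from typing import List

@dataclass
class Waypoint:
    """Hypothetical operator-placed waypoint in the shared factory map (meters)."""
    x: float
    y: float
    action: str = "pass"  # "pick", "drop", or "pass" (drive through without stopping)

@dataclass
class KeepOutZone:
    """Axis-aligned rectangle where the cobot must not stop, e.g. a pedestrian walkway."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, wp: Waypoint) -> bool:
        return self.x_min <= wp.x <= self.x_max and self.y_min <= wp.y <= self.y_max

def build_route(waypoints: List[Waypoint], zones: List[KeepOutZone]) -> float:
    """Validate an operator's waypoint program and return its total path length in meters.

    Raises ValueError if a waypoint lies in a keep-out zone or if the program
    drops a load it never picked up.
    """
    carrying = False
    for i, wp in enumerate(waypoints):
        if any(zone.contains(wp) for zone in zones):
            raise ValueError(f"waypoint {i} lies inside a keep-out zone")
        if wp.action == "pick":
            carrying = True
        elif wp.action == "drop":
            if not carrying:
                raise ValueError(f"waypoint {i} drops a load that was never picked up")
            carrying = False
    # Sum the straight-line segments between consecutive waypoints.
    return sum(math.dist((a.x, a.y), (b.x, b.y)) for a, b in zip(waypoints, waypoints[1:]))
```

A program of pick at (0, 0), pass through (3, 4), and drop at (3, 10) validates and has a length of 11 m (5 m plus 6 m).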

Spatial analytics for workforce productivity

Factories and industrial plants constantly seek to optimize the workflows and movements of their hundreds of employees. Seventy-one percent of manufacturers say manual time and motion studies are important for this workforce optimization, yet 43% are not confident in the data they yield.

Leveraging spatial analytics for continuous process improvement can more accurately and readily identify worker and production bottlenecks than manual or paper methods. This insight could be critical for reconfiguring production capacity and manufacturing processes to improve labor efficiencies, increase safety and productivity, and even introduce new products to market more expediently.
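One simple form of spatial analytics is accumulating how long workers spend in each zone of the floor. The Python sketch below assumes location traces of `(timestamp_s, x, y)` samples per worker and rectangular zones; the zone layout, the function names, and the idea of treating the zone with the most dwell time as a bottleneck candidate are illustrative assumptions, not a validated methodology.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Tuple

# Hypothetical floor layout: zone name -> (x_min, y_min, x_max, y_max) in meters.
ZONES: Dict[str, Tuple[float, float, float, float]] = {
    "assembly": (0.0, 0.0, 10.0, 10.0),
    "kitting": (10.0, 0.0, 20.0, 10.0),
}

Sample = Tuple[float, float, float]  # (timestamp in seconds, x, y)

def zone_of(x: float, y: float) -> Optional[str]:
    """Return the zone containing (x, y); half-open bounds give each edge to exactly one zone."""
    for name, (x0, y0, x1, y1) in ZONES.items():
        if x0 <= x < x1 and y0 <= y < y1:
            return name
    return None

def dwell_times(traces: Dict[str, List[Sample]]) -> Dict[str, float]:
    """Total seconds spent in each zone across all workers' time-ordered traces.

    Each interval between consecutive samples is credited to the zone of the earlier sample.
    """
    totals: Dict[str, float] = defaultdict(float)
    for samples in traces.values():
        for (t0, x, y), (t1, _, _) in zip(samples, samples[1:]):
            zone = zone_of(x, y)
            if zone is not None:
                totals[zone] += t1 - t0
    return dict(totals)

def busiest_zone(traces: Dict[str, List[Sample]]) -> Optional[str]:
    """Zone with the most accumulated dwell time: a candidate bottleneck to investigate."""
    totals = dwell_times(traces)
    return max(totals, key=totals.get) if totals else None
```

Unlike a manual time and motion study, a calculation like this can be rerun continuously as new location data arrives.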

AR and mixed reality (MR) are a natural user interface for spatial computing because they enable humans to visualize data in physical context. However, not all AR/MR is spatial computing, because not all AR/MR solutions use location data.
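The difference between location-aware AR and a plain screen overlay shows up in the math: to pin a label to a physical spot, the device needs to know its own position and orientation. The Python sketch below is a generic pinhole-camera projection under simplifying assumptions (a level camera with known position and heading, a single focal length in pixels, and a hypothetical `project_anchor` helper); it is not how Vuforia or any specific AR SDK implements tracking.

```python
import math
from typing import Optional, Tuple

def project_anchor(
    point: Tuple[float, float, float],
    cam_pos: Tuple[float, float, float],
    cam_yaw: float,
    focal_px: float = 800.0,
    width: int = 1280,
    height: int = 720,
) -> Optional[Tuple[float, float]]:
    """Project a world-anchored 3D point (x east, y north, z up; meters) to pixel coordinates.

    Assumes a level pinhole camera at cam_pos facing along cam_yaw (radians CCW from +x).
    Returns None if the point is behind the camera or falls outside the image.
    """
    dx, dy, dz = (p - c for p, c in zip(point, cam_pos))
    forward = dx * math.cos(cam_yaw) + dy * math.sin(cam_yaw)  # depth along the view direction
    right = dx * math.sin(cam_yaw) - dy * math.cos(cam_yaw)    # offset to the camera's right
    up = dz                                                     # level camera: world up = camera up
    if forward <= 0:
        return None
    u = width / 2 + focal_px * right / forward
    v = height / 2 - focal_px * up / forward  # image rows grow downward
    if 0 <= u < width and 0 <= v < height:
        return (u, v)
    return None
```

A label anchored 4 m straight ahead at eye level lands in the center of the image, while the same label 1 m higher moves up the screen; without the camera's pose, there is no way to keep it attached to that spot as the user moves.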

Final thoughts

These are a few among many potential use cases in which spatial computing will drive business value across industrial companies’ products, processes, people, and places. With fully three-quarters of the global workforce doing front-line work and investments in facilities and equipment that can involve billions of dollars, the opportunity for optimizing business processes is enormous.

While it is still early days, spatial computing will come into the purview of industrial companies as enabling technologies such as IoT, AR/MR, and AI become more widely adopted and as new sources of location data are captured and used.

PTC is actively exploring, innovating, and creating solutions around spatial computing at the PTC Reality Lab, our research group dedicated to examining the next disruptive technologies for industrial companies. For those ready to get started today, download the Vuforia Spatial Toolbox, an open-source offering we’ve created to allow developers, innovators, and researchers to begin experimenting with spatial computing within their own companies.
